This is a guest post. The views expressed here are solely those of the authors and do not represent positions of IEEE Spectrum or the IEEE.

The degree to which large language models (LLMs) might “memorize” some of their training inputs has long been a question, raised by scholars including Google DeepMind’s Nicholas Carlini and the first author of this article (Gary Marcus). Recent empirical work has shown that LLMs are in some instances capable of reproducing, or reproducing with minor changes, substantial chunks of text that appear in their training sets.

For example, a 2023 paper by Milad Nasr and colleagues showed that LLMs can be prompted into dumping private information such as email address and phone numbers. Carlini and coauthors recently showed that larger chatbot models (though not smaller ones) sometimes regurgitated large chunks of text verbatim.

Similarly, the recent lawsuit that The New York Times filed against OpenAI showed many examples in which OpenAI software recreated New York Times stories nearly verbatim (words in red are verbatim):

We will call such near-verbatim outputs “plagiaristic outputs,” because prima facie if a human created them we would call them instances of plagiarism. Aside from a few brief remarks later, we leave it to lawyers to reflect on how such materials might be treated in full legal context.

In the language of mathematics, these example of near-verbatim reproduction are existence proofs. They do not directly answer the questions of how often such plagiaristic outputs occur or under precisely what circumstances they occur.

These results provide powerful evidence ... that at least some generative AI systems may produce plagiaristic outputs, even when not directly asked to do so, potentially exposing users to copyright infringement claims.

Such questions are hard to answer with precision, in part because LLMs are “black boxes”—systems in which we do not fully understand the relation between input (training data) and outputs. What’s more, outputs can vary unpredictably from one moment to the next. The prevalence of plagiaristic responses likely depends heavily on factors such as the size of the model and the exact nature of the training set. Since LLMs are fundamentally black boxes (even to their own makers, whether open-sourced or not), questions about plagiaristic prevalence can probably only be answered experimentally, and perhaps even then only tentatively.

Even though prevalence may vary, the mere existence of plagiaristic outputs raise many important questions, including technical questions (can anything be done to suppress such outputs?), sociological questions (what could happen to journalism as a consequence?), legal questions (would these outputs count as copyright infringement?), and practical questions (when an end-user generates something with a LLM, can the user feel comfortable that they are not infringing on copyright? Is there any way for a user who wishes not to infringe to be assured that they are not?).

The New York Times v. OpenAI lawsuit arguably makes a good case that these kinds of outputs do constitute copyright infringement. Lawyers may of course disagree, but it’s clear that quite a lot is riding on the very existence of these kinds of outputs—as well as on the outcome of that particular lawsuit, which could have significant financial and structural implications for the field of generative AI going forward.

Exactly parallel questions can be raised in the visual domain. Can image-generating models be induced to produce plagiaristic outputs based on copyright materials?

Case study: Plagiaristic visual outputs in Midjourney v6

Just before The New York Times v. OpenAI lawsuit was made public, we found that the answer is clearly yes, even without directly soliciting plagiaristic outputs. Here are some examples elicited from the “alpha” version of Midjourney V6 by the second author of this article, a visual artist who was worked on a number of major films (including The Matrix Resurrections, Blue Beetle, and The Hunger Games) with many of Hollywood’s best-known studios (including Marvel and Warner Bros.).

After a bit of experimentation (and in a discovery that led us to collaborate), Southen found that it was in fact easy to generate many plagiaristic outputs, with brief prompts related to commercial films (prompts are shown).

We also found that cartoon characters could be easily replicated, as evinced by these generated images of the Simpsons.

In light of these results, it seems all but certain that Midjourney V6 has been trained on copyrighted materials (whether or not they have been licensed, we do not know) and that their tools could be used to create outputs that infringe. Just as we were sending this to press, we also found important related work by Carlini on visual images on the Stable Diffusion platform that converged on similar conclusions, albeit using a more complex, automated adversarial technique.

After this, we (Marcus and Southen) began to collaborate, and conduct further experiments.

Visual models can produce near replicas of trademarked characters with indirect prompts

In many of the examples above, we directly referenced a film (for example, Avengers: Infinity War); this established that Midjourney can recreate copyrighted materials knowingly, but left open a question of whether some one could potentially infringe without the user doing so deliberately.

In some ways the most compelling part of The New York Times complaint is that the plaintiffs established that plagiaristic responses could be elicited without invoking The New York Times at all. Rather than addressing the system with a prompt like “could you write an article in the style of The New York Times about such-and-such,” the plaintiffs elicited some plagiaristic responses simply by giving the first few words from a Times story, as in this example.

Such examples are particularly compelling because they raise the possibility that an end user might inadvertently produce infringing materials. We then asked whether a similar thing might happen in the visual domain.

The answer was a resounding yes. In each sample, we present a prompt and an output. In each image, the system has generated clearly recognizable characters (the Mandalorian, Darth Vader, Luke Skywalker, and more) that we assume are both copyrighted and trademarked; in no case were the source films or specific characters directly evoked by name. Crucially, the system was not asked to infringe, but the system yielded potentially infringing artwork, anyway.

We saw this phenomenon play out with both movie and video game characters.

Evoking film-like frames without direct instruction

In our third experiment with Midjourney, we asked whether it was capable of evoking entire film frames, without direct instruction. Again, we found that the answer was yes. (The top one is from a Hot Toys shoot rather than a film.)

Ultimately we discovered that a prompt of just a single word (not counting routine parameters) that’s not specific to any film, character, or actor yielded apparently infringing content: that word was “screencap.” The images below were created with that prompt.

We fully expect that Midjourney will immediately patch this specific prompt, rendering it ineffective, but the ability to produce potentially infringing content is manifest.

In the course of two weeks’ investigation we found hundreds of examples of recognizable characters from films and games; we’ll release some further examples soon on YouTube. Here’s a partial list of the films, actors, games we recognized.

Implications for Midjourney

These results provide powerful evidence that Midjourney has trained on copyrighted materials, and establish that at least some generative AI systems may produce plagiaristic outputs, even when not directly asked to do so, potentially exposing users to copyright infringement claims. Recent journalism supports the same conclusion; for example a lawsuit has introduced a spreadsheet attributed to Midjourney containing a list of more than 4,700 artists whose work is thought to have been used in training, quite possibly without consent. For further discussion of generative AI data scraping, see Create Don’t Scrape.

How much of Midjourney’s source materials are copyrighted materials that are being used without license? We do not know for sure. Many outputs surely resemble copyrighted materials, but the company has not been transparent about its source materials, nor about what has been properly licensed. (Some of this may come out in legal discovery, of course.) We suspect that at least some has not been licensed.

Indeed, some of the company’s public comments have been dismissive of the question. When Midjourney’s CEO was interviewed by Forbes, expressing a certain lack of concern for the rights of copyright holders, saying in response to an interviewer who asked: “Did you seek consent from living artists or work still under copyright?”

No. There isn’t really a way to get a hundred million images and know where they’re coming from. It would be cool if images had metadata embedded in them about the copyright owner or something. But that’s not a thing; there’s not a registry. There’s no way to find a picture on the Internet, and then automatically trace it to an owner and then have any way of doing anything to authenticate it.

If any of the source material is not licensed, it seems to us (as non lawyers) that this potentially opens Midjourney to extensive litigation by film studios, video game publishers, actors, and so on.

The gist of copyright and trademark law is to limit unauthorized commercial reuse in order to protect content creators. Since Midjourney charges subscription fees, and could be seen as competing with the studios, we can understand why plaintiffs might consider litigation. (Indeed, the company has already been sued by some artists.)

Midjourney apparently sought to suppress our findings, banning one of this story’s authors after he reported his first results.

Of course, not every work that uses copyrighted material is illegal. In the United States, for example, a four-part doctrine of fair use allows potentially infringing works to be used in some instances, such as if the usage is brief and for the purposes of criticism, commentary, scientific evaluation, or parody. Companies like Midjourney might wish to lean on this defense.

Fundamentally, however, Midjourney is a service that sells subscriptions, at large scale. An individual user might make a case with a particular instance of potential infringement that their specific use of, for example, a character from Dune was for satire or criticism, or their own noncommercial purposes. (Much of what is referred to as “fan fiction” is actually considered copyright infringement, but it’s generally tolerated where noncommercial.) Whether Midjourney can make this argument on a mass scale is another question altogether.

One user on X pointed to the fact that Japan has allowed AI companies to train on copyright materials. While this observation is true, it is incomplete and oversimplified, as that training is constrained by limitations on unauthorized use drawn directly from relevant international law (including the Berne Convention and TRIPS agreement). In any event, the Japanese stance seems unlikely to be carry any weight in American courts.

More broadly, some people have expressed the sentiment that information of all sorts ought to be free. In our view, this sentiment does not respect the rights of artists and creators; the world would be the poorer without their work.

Moreover, it reminds us of arguments that were made in the early days of Napster, when songs were shared over peer-to-peer networks with no compensation to their creators or publishers. Recent statements such as “In practice, copyright can’t be enforced with such powerful models like [Stable Diffusion] or Midjourney—even if we agree about regulations, it’s not feasible to achieve,” are a modern version of that line of argument.

We do not think that large generative AI companies should assume that the laws of copyright and trademark will inevitability be rewritten around their needs.

Significantly, in the end, Napster’s infringement on a mass scale was shut down by the courts, after lawsuits by Metallica and the Recording Industry Association of America (RIAA). The new business model of streaming was launched, in which publishers and artists (to a much smaller degree than we would like) received a cut.

Napster as people knew it essentially disappeared overnight; the company itself went bankrupt, with its assets, including its name, sold to a streaming service. We do not think that large generative AI companies should assume that the laws of copyright and trademark will inevitability be rewritten around their needs.

If companies like Disney, Marvel, DC, and Nintendo follow the lead of The New York Times and sue over copyright and trademark infringement, it’s entirely possible that they’ll win, much as the RIAA did before.

Compounding these matters, we have discovered evidence that a senior software engineer at Midjourney took part in a conversation in February 2022 about how to evade copyright law by “laundering” data “through a fine tuned codex.” Another participant who may or may not have worked for Midjourney then said “at some point it really becomes impossible to trace what’s a derivative work in the eyes of copyright.”

As we understand things, punitive damages could be large. As mentioned before, sources have recently reported that Midjourney may have deliberately created an immense list of artists on which to train, perhaps without licensing or compensation. Given how close the current software seems to come to source materials, it’s not hard to envision a class action lawsuit.

Moreover, Midjourney apparently sought to suppress our findings, banning Southen (without even a refund) after he reported his first results, and again after he created a new account from which additional results were reported. It then apparently changed its terms of service just before Christmas by inserting new language: “You may not use the Service to try to violate the intellectual property rights of others, including copyright, patent, or trademark rights. Doing so may subject you to penalties including legal action or a permanent ban from the Service.” This change might be interpreted as discouraging or even precluding the important and common practice of red-team investigations of the limits of generative AI—a practice that several major AI companies committed to as part of agreements with the White House announced in 2023. (Southen created two additional accounts in order to complete this project; these, too, were banned, with subscription fees not returned.)

We find these practices—banning users and discouraging red-teaming—unacceptable. The only way to ensure that tools are valuable, safe, and not exploitative is to allow the community an opportunity to investigate; this is precisely why the community has generally agreed that red-teaming is an important part of AI development, particularly because these systems are as yet far from fully understood.

The very pressure that drives generative AI companies to gather more data and make their models larger may also be making the models more plagiaristic.

We encourage users to consider using alternative services unless Midjourney retracts these policies that discourage users from investigating the risks of copyright infringement, particularly since Midjourney has been opaque about their sources.

Finally, as a scientific question, it is not lost on us that Midjourney produces some of the most detailed images of any current image-generating software. An open question is whether the propensity to create plagiaristic images increases along with increases in capability.

The data on text outputs by Nicholas Carlini that we mentioned above suggests that this might be true, as does our own experience and one informal report we saw on X. It makes intuitive sense that the more data a system has, the better it can pick up on statistical correlations, but also perhaps the more prone it is to recreating something exactly.

Put slightly differently, if this speculation is correct, the very pressure that drives generative AI companies to gather more and more data and make their models larger and larger (in order to make the outputs more humanlike) may also be making the models more plagiaristic.

Plagiaristic visual outputs in another platform: DALL-E 3

An obvious follow-up question is to what extent are the things we have documented true of of other generative AI image-creation systems? Our next set of experiments asked whether what we found with respect to Midjourney was true on OpenAI’s DALL-E 3, as made available through Microsoft’s Bing.

As we reported recently on Substack, the answer was again clearly yes. As with Midjourney, DALL-E 3 was capable of creating plagiaristic (near identical) representations of trademarked characters, even when those characters were not mentioned by name.

DALL-E 3 also created a whole universe of potential trademark infringements with this single two-word prompt: animated toys [bottom right].

OpenAI’s DALL-E 3, like Midjourney, appears to have drawn on a wide array of copyrighted sources. As in Midjourney’s case, OpenAI seems to be well aware of the fact that their software might infringe on copyright, offering in November to indemnify users (with some restrictions) from copyright infringement lawsuits. Given the scale of what we have uncovered here, the potential costs are considerable.

How hard is it to replicate these phenomena?

As with any stochastic system, we cannot guarantee that our specific prompts will lead other users to identical outputs; moreover there has been some speculation that OpenAI has been changing their system in real time to rule out some specific behavior that we have reported on. Nonetheless, the overall phenomenon was widely replicated within two days of our original report, with other trademarked entities and even in other languages.

The next question is, how hard is it to solve these problems?

Possible solution: removing copyright materials

The cleanest solution would be to retrain the image-generating models without using copyrighted materials, or to restrict training to properly licensed data sets.

Note that one obvious alternative—removing copyrighted materials only post hoc when there are complaints, analogous to takedown requests on YouTube—is much more costly to implement than many readers might imagine. Specific copyrighted materials cannot in any simple way be removed from existing models; large neural networks are not databases in which an offending record can easily be deleted. As things stand now, the equivalent of takedown notices would require (very expensive) retraining in every instance.

Even though companies clearly could avoid the risks of infringing by retraining their models without any unlicensed materials, many might be tempted to consider other approaches. Developers may well try to avoid licensing fees, and to avoid significant retraining costs. Moreover results may well be worse without copyrighted materials.

Generative AI vendors may therefore wish to patch their existing systems so as to restrict certain kinds of queries and certain kinds of outputs. We have already seem some signs of this (below), but believe it to be an uphill battle.

We see two basic approaches to solving the problem of plagiaristic images without retraining the models, neither easy to implement reliably.

Possible solution: filtering out queries that might violate copyright

For filtering out problematic queries, some low hanging fruit is trivial to implement (for example, don’t generate Batman). But other cases can be subtle, and can even span more than one query, as in this example from X user NLeseul:

Experience has shown that guardrails in text-generating systems are often simultaneously too lax in some cases and too restrictive in others. Efforts to patch image- (and eventually video-) generation services are likely to encounter similar difficulties. For instance, a friend, Jonathan Kitzen, recently asked Bing for “a toilet in a desolate sun baked landscape.” Bing refused to comply, instead returning a baffling “unsafe image content detected” flag. Moreover, as Katie Conrad has shown, Bing’s replies about whether the content it creates can legitimately used are at times deeply misguided.

Already, there are online guides with advice on how to outwit OpenAI’s guardrails for DALL-E 3, with advice like “Include specific details that distinguish the character, such as different hairstyles, facial features, and body textures” and “Employ color schemes that hint at the original but use unique shades, patterns, and arrangements.” The long tail of difficult-to-anticipate cases like the Brad Pitt interchange below (reported on Reddit) may be endless.

Possible solution: filtering out sources

It would be great if art generation software could list the sources it drew from, allowing humans to judge whether an end product is derivative, but current systems are simply too opaque in their “black box” nature to allow this. When we get an output in such systems, we don’t know how it relates to any particular set of inputs.

The very existence of potentially infringing outputs is evidence of another problem: the nonconsensual use of copyrighted human work to train machines.

No current service offers to deconstruct the relations between the outputs and specific training examples, nor are we aware of any compelling demos at this time. Large neural networks, as we know how to build them, break information into many tiny distributed pieces; reconstructing provenance is known to be extremely difficult.

As a last resort, the X user @bartekxx12 has experimented with trying to get ChatGPT and Google Reverse Image Search to identify sources, with mixed (but not zero) success. It remains to be seen whether such approaches can be used reliably, particularly with materials that are more recent and less well-known than those we used in our experiments.

Importantly, although some AI companies and some defenders of the status quo have suggested filtering out infringing outputs as a possible remedy, such filters should in no case be understood as a complete solution. The very existence of potentially infringing outputs is evidence of another problem: the nonconsensual use of copyrighted human work to train machines. In keeping with the intent of international law protecting both intellectual property and human rights, no creator’s work should ever be used for commercial training without consent.

Why does all this matter, if everyone already knows Mario anyway?

Say you ask for an image of a plumber, and get Mario. As a user, can’t you just discard the Mario images yourself? X user @Nicky_BoneZ addresses this vividly:

… everyone knows what Mario looks Iike. But nobody would recognize Mike Finklestein’s wildlife photography. So when you say “super super sharp beautiful beautiful photo of an otter leaping out of the water” You probably don’t realize that the output is essentially a real photo that Mike stayed out in the rain for three weeks to take.

As the same user points out, individuals artists such as Finklestein are also unlikely to have sufficient legal staff to pursue claims against AI companies, however valid.

Another X user similarly discussed an example of a friend who created an image with a prompt of “man smoking cig in style of 60s” and used it in a video; the friend didn’t know they’d just used a near duplicate of a Getty Image photo of Paul McCartney.

These companies may well also court attention from the U.S. Federal Trade Commission and other consumer protection agencies across the globe.

In a simple drawing program, anything users create is theirs to use as they wish, unless they deliberately import other materials. The drawing program itself never infringes. With generative AI, the software itself is clearly capable of creating infringing materials, and of doing so without notifying the user of the potential infringement.

With Google Image search, you get back a link, not something represented as original artwork. If you find an image via Google, you can follow that link in order to try to determine whether the image is in the public domain, from a stock agency, and so on. In a generative AI system, the invited inference is that the creation is original artwork that the user is free to use. No manifest of how the artwork was created is supplied.

Aside from some language buried in the terms of service, there is no warning that infringement could be an issue. Nowhere to our knowledge is there a warning that any specific generated output potentially infringes and therefore should not be used for commercial purposes. As Ed Newton-Rex, a musician and software engineer who recently walked away from Stable Diffusion out of ethical concerns put it,

Users should be able to expect that the software products they use will not cause them to infringe copyright. And in multiple examples currently [circulating], the user could not be expected to know that the model’s output was a copy of someone’s copyrighted work.

In the words of risk analyst Vicki Bier,

“If the tool doesn’t warn the user that the output might be copyrighted how can the user be responsible? AI can help me infringe copyrighted material that I have never seen and have no reason to know is copyrighted.”

Indeed, there is no publicly available tool or database that users could consult to determine possible infringement. Nor any instruction to users as how they might possibly do so.

In putting an excessive, unusual, and insufficiently explained burden on both users and non-consenting content providers, these companies may well also court attention from the U.S. Federal Trade Commission and other consumer protection agencies across the globe.

Ethics and a broader perspective

Software engineer Frank Rundatz recently stated a broader perspective.

One day we’re going to look back and wonder how a company had the audacity to copy all the world’s information and enable people to violate the copyrights of those works.

All Napster did was enable people to transfer files in a peer-to-peer manner. They didn’t even host any of the content! Napster even developed a system to stop 99.4% of copyright infringement from their users but were still shut down because the court required them to stop 100%.

OpenAI scanned and hosts all the content, sells access to it and will even generate derivative works for their paying users.

Ditto, of course, for Midjourney.

Stanford Professor Surya Ganguli adds:

Many researchers I know in big tech are working on AI alignment to human values. But at a gut level, shouldn’t such alignment entail compensating humans for providing training data thru their original creative, copyrighted output? (This is a values question, not a legal one).

Extending Ganguli’s point, there are other worries for image-generation beyond intellectual property and the rights of artists. Similar kinds of image-generation technologies are being used for purposes such as creating child sexual abuse materials and nonconsensual deepfaked porn. To the extent that the AI community is serious about aligning software to human values, it’s imperative that laws, norms, and software be developed to combat such uses.

Summary

It seems all but certain that generative AI developers like OpenAI and Midjourney have trained their image-generation systems on copyrighted materials. Neither company has been transparent about this; Midjourney went so far as to ban us three times for investigating the nature of their training materials.

Both OpenAI and Midjourney are fully capable of producing materials that appear to infringe on copyright and trademarks. These systems do not inform users when they do so. They do not provide any information about the provenance of the images they produce. Users may not know, when they produce an image, whether they are infringing.

Unless and until someone comes up with a technical solution that will either accurately report provenance or automatically filter out the vast majority of copyright violations, the only ethical solution is for generative AI systems to limit their training to data they have properly licensed. Image-generating systems should be required to license the art used for training, just as streaming services are required to license their music and video.

Both OpenAI and Midjourney are fully capable of producing materials that appear to infringe on copyright and trademarks. These systems do not inform users when they do so.

We hope that our findings (and similar findings from others who have begun to test related scenarios) will lead generative AI developers to document their data sources more carefully, to restrict themselves to data that is properly licensed, to include artists in the training data only if they consent, and to compensate artists for their work. In the long run, we hope that software will be developed that has great power as an artistic tool, but that doesn’t exploit the art of nonconsenting artists.

Although we have not gone into it here, we fully expect that similar issues will arise as generative AI is applied to other fields, such as music generation.

Following up on the The New York Times lawsuit, our results suggest that generative AI systems may regularly produce plagiaristic outputs, both written and visual, without transparency or compensation, in ways that put undue burdens on users and content creators. We believe that the potential for litigation may be vast, and that the foundations of the entire enterprise may be built on ethically shaky ground.

The order of authors is alphabetical; both authors contributed equally to this project. Gary Marcus wrote the first draft of this manuscript and helped guide some of the experimentation, while Reid Southen conceived of the investigation and elicited all the images.

- DALL-E 2’s Failures Are the Most Interesting Thing About It ›

- AI Art Generators Can Be Fooled Into Making NSFW Images ›

is a scientist and best-selling author who spoke before the United States Senate in May about AI oversight. See full bio →

is film industry concept artist who has worked with many major studios (including Marvel, DC, Paramount, and 20th Century Fox) and on many major films (including The Matrix Resurrections, The Hunger Games, and Blue Beetle). See full bio →

A surveillance camera watches over the sledding center at the 2022 Winter Olympics.Getty Images

A surveillance camera watches over the sledding center at the 2022 Winter Olympics.Getty Images

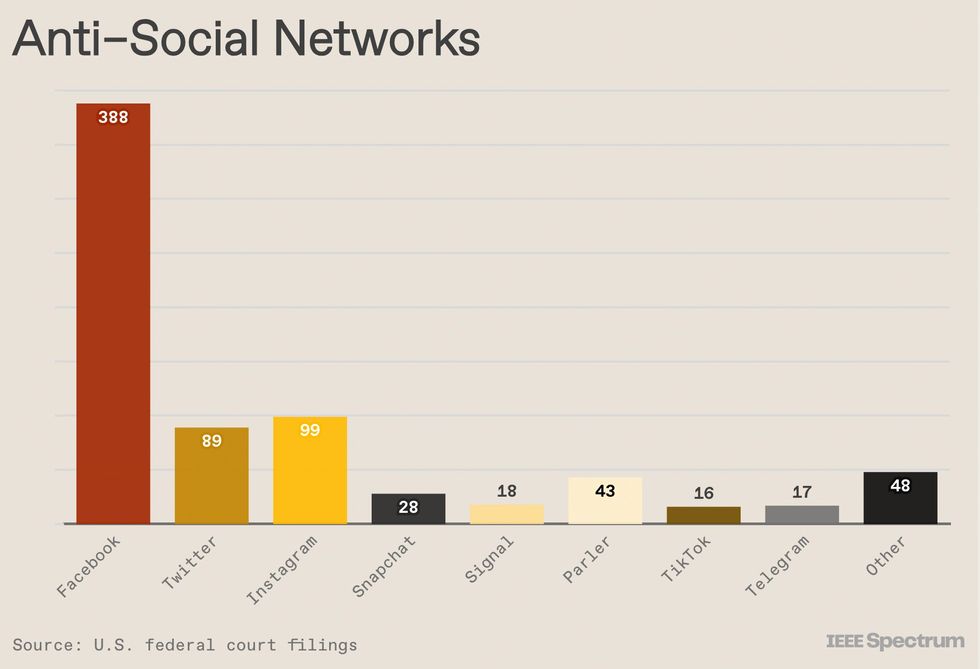

Many accused of crimes on 6 January proudly shared details of their actions to their feeds, resulting in tens of thousands of tips to the FBI. Social networks were cited in about two-thirds of investigations, from 2021 to the end of 2022 [above]. Over the last year, social media was mentioned in far fewer new charging documents.

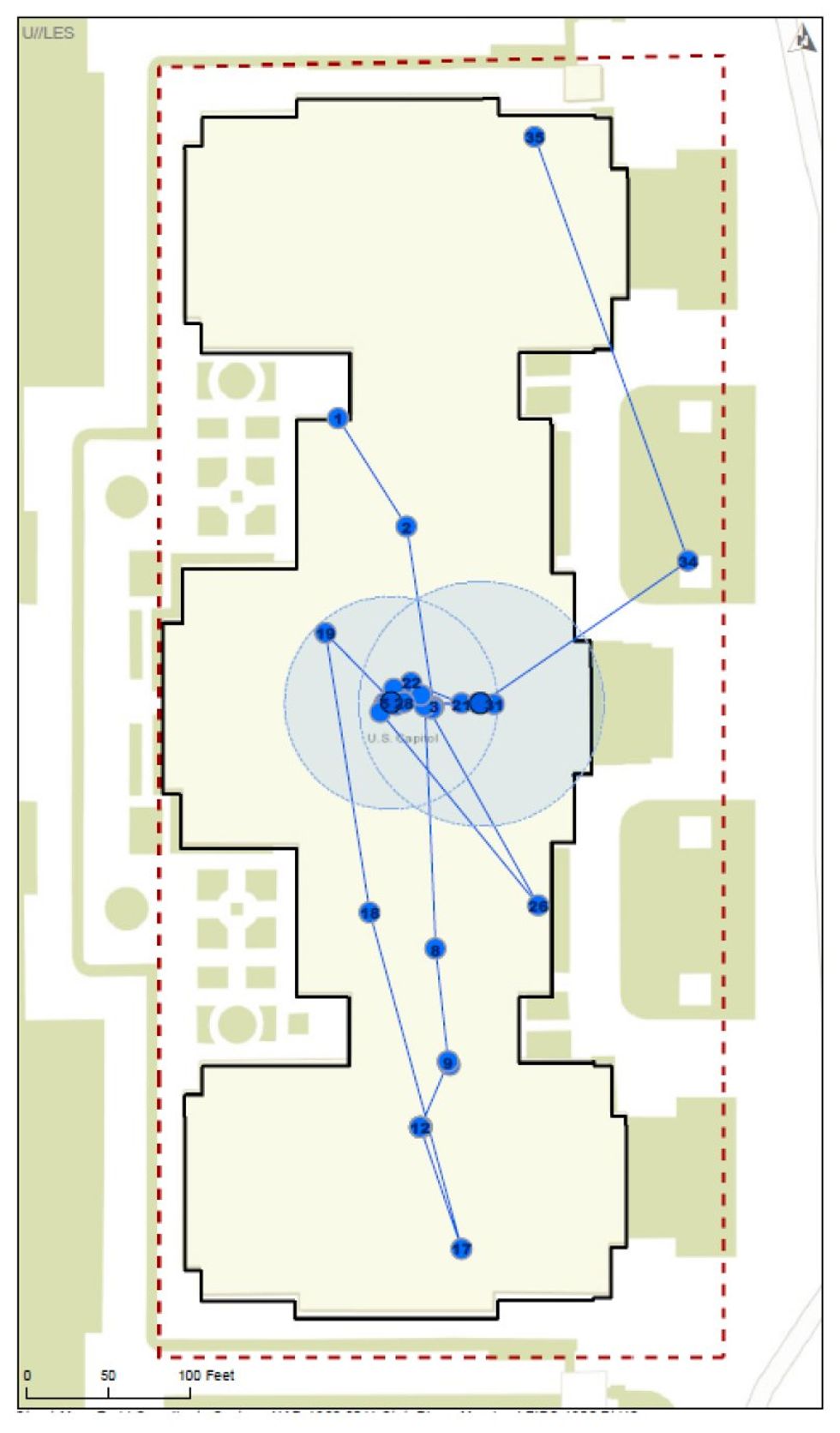

Many accused of crimes on 6 January proudly shared details of their actions to their feeds, resulting in tens of thousands of tips to the FBI. Social networks were cited in about two-thirds of investigations, from 2021 to the end of 2022 [above]. Over the last year, social media was mentioned in far fewer new charging documents. Geofence data surrendered by Google for cellphone location tracking has proven indispensable for authorities charging Jan. 6 rioters. U.S. Department of Justice

Geofence data surrendered by Google for cellphone location tracking has proven indispensable for authorities charging Jan. 6 rioters. U.S. Department of Justice

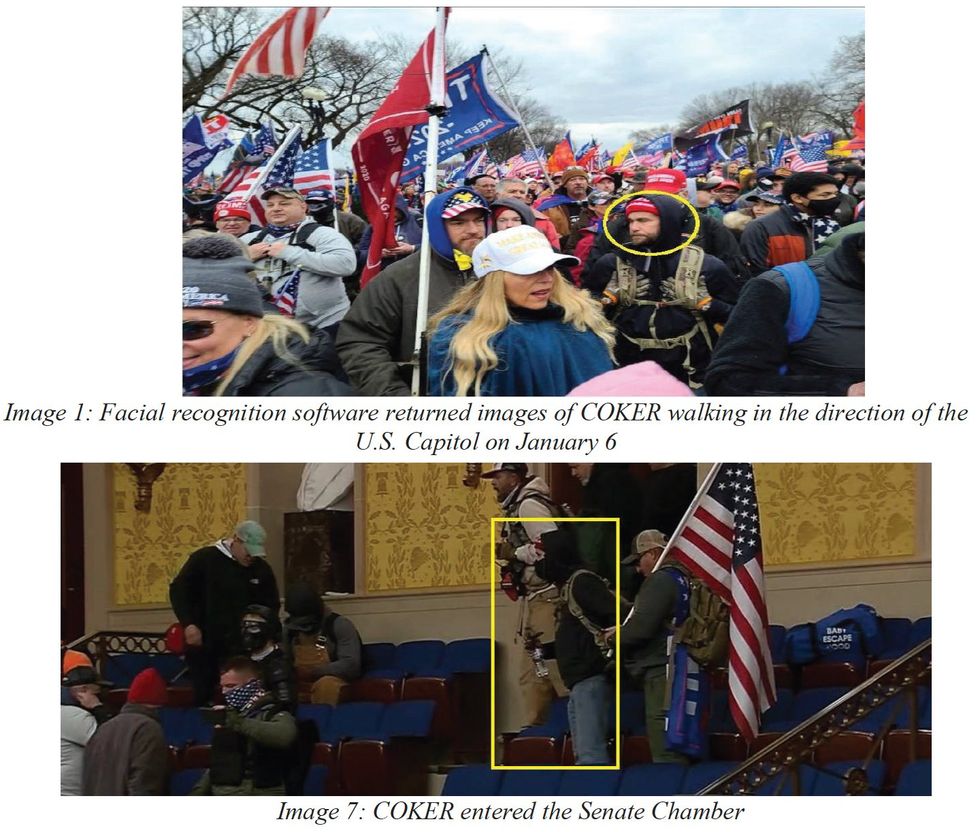

These images, from the charging document of an alleged January 6 rioter, were discovered—according to the document—by facial-recognition searches using the suspect’s driver’s license photo as comparison. U.S. Department of Justice

These images, from the charging document of an alleged January 6 rioter, were discovered—according to the document—by facial-recognition searches using the suspect’s driver’s license photo as comparison. U.S. Department of Justice

A court filing for another alleged January 6 rioter compared a photo of a man holding a stick preparing to enter the Capitol with a police body-cam image from a traffic stop in Hadley, Mass., two years later. U.S. Department of Justice

A court filing for another alleged January 6 rioter compared a photo of a man holding a stick preparing to enter the Capitol with a police body-cam image from a traffic stop in Hadley, Mass., two years later. U.S. Department of Justice

Claudio Silva is the co-director of the Visualization Imaging and Data Analytics (VIDA) Center and professor of computer science and engineering at the NYU Tandon School of Engineering and NYU Center for Data Science.NYU Tandon

Claudio Silva is the co-director of the Visualization Imaging and Data Analytics (VIDA) Center and professor of computer science and engineering at the NYU Tandon School of Engineering and NYU Center for Data Science.NYU Tandon

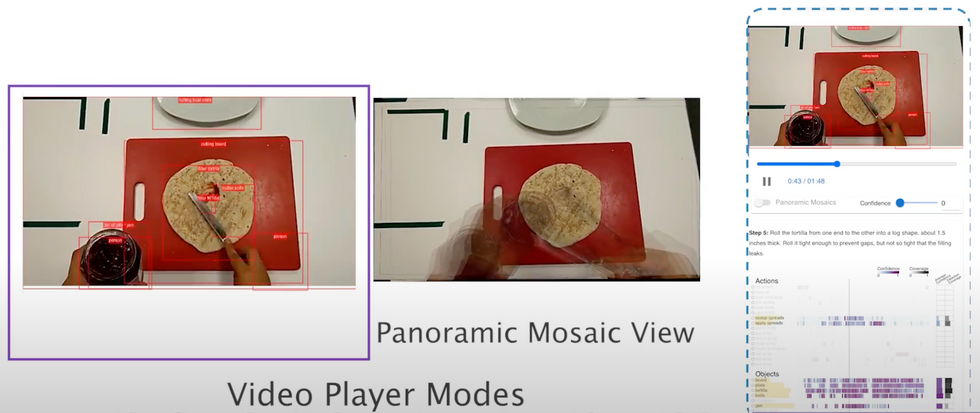

In building the technology, Silva’s team turned to a specific task that required a lot of visual analysis, and could benefit from a checklist based system: cooking.

NYU Tandon

In building the technology, Silva’s team turned to a specific task that required a lot of visual analysis, and could benefit from a checklist based system: cooking.

NYU Tandon

ARGUS, the interactive visual analytics tool, allows for real-time monitoring and debugging while an AR system is in use. It lets developers see what the AR system sees and how it’s interpreting the environment and user actions. They can also adjust settings and record data for later analysis.NYU Tandon

ARGUS, the interactive visual analytics tool, allows for real-time monitoring and debugging while an AR system is in use. It lets developers see what the AR system sees and how it’s interpreting the environment and user actions. They can also adjust settings and record data for later analysis.NYU Tandon

NASA research engineer Ryan McClelland calls these 3D-printed components, which he designed using commercial AI software, “evolved structures.” Henry Dennis/NASA

NASA research engineer Ryan McClelland calls these 3D-printed components, which he designed using commercial AI software, “evolved structures.” Henry Dennis/NASA

Aston Martin used AI software to design parts for its DBR22 concept car. Aston Martin

Aston Martin used AI software to design parts for its DBR22 concept car. Aston Martin

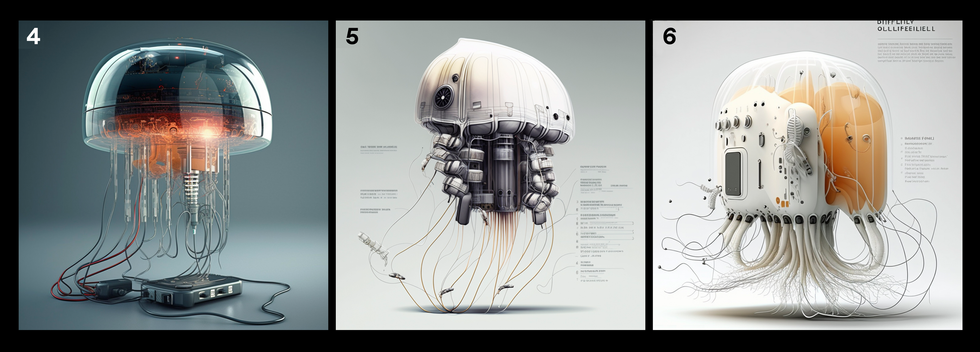

In the author’s early attempts to generate an image of a jellyfish robot [image 1], she used this prompt:

In the author’s early attempts to generate an image of a jellyfish robot [image 1], she used this prompt:  As the author added specific details to her prompts, she got images that aligned better with her vision of a jellyfish robot. Images 4, 5, and 6 all resulted from the prompt:

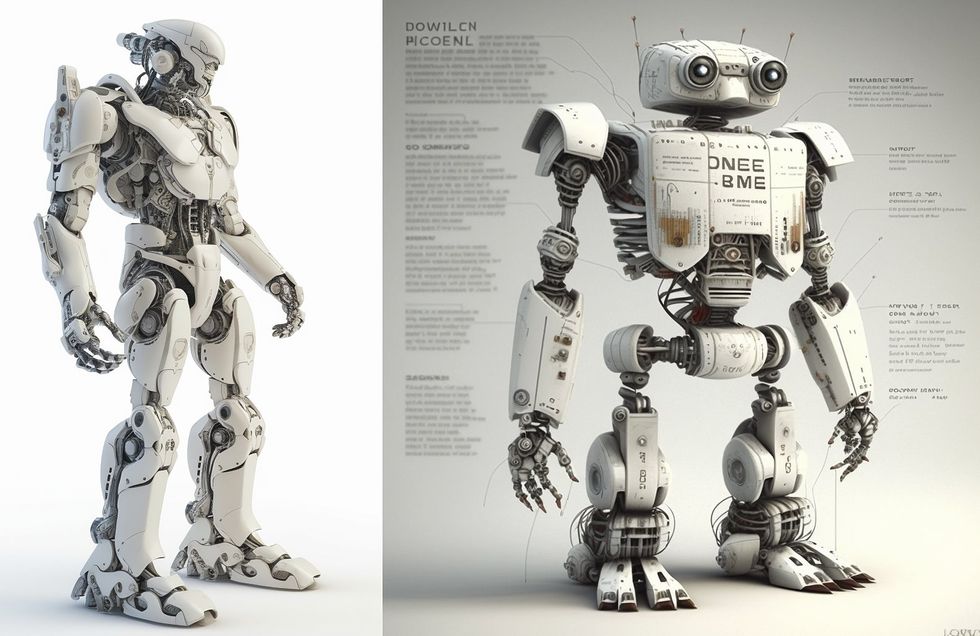

As the author added specific details to her prompts, she got images that aligned better with her vision of a jellyfish robot. Images 4, 5, and 6 all resulted from the prompt:  To generate an image of a

To generate an image of a  The author used this prompt to generate images of an octopus-like robot:

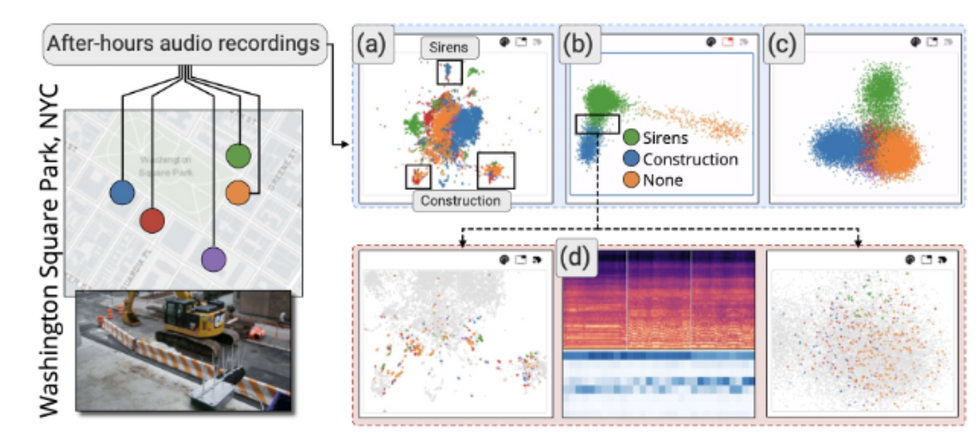

The author used this prompt to generate images of an octopus-like robot:  The author tried using image generators to show complex information flow in a smart city, based on this prompt:

The author tried using image generators to show complex information flow in a smart city, based on this prompt:

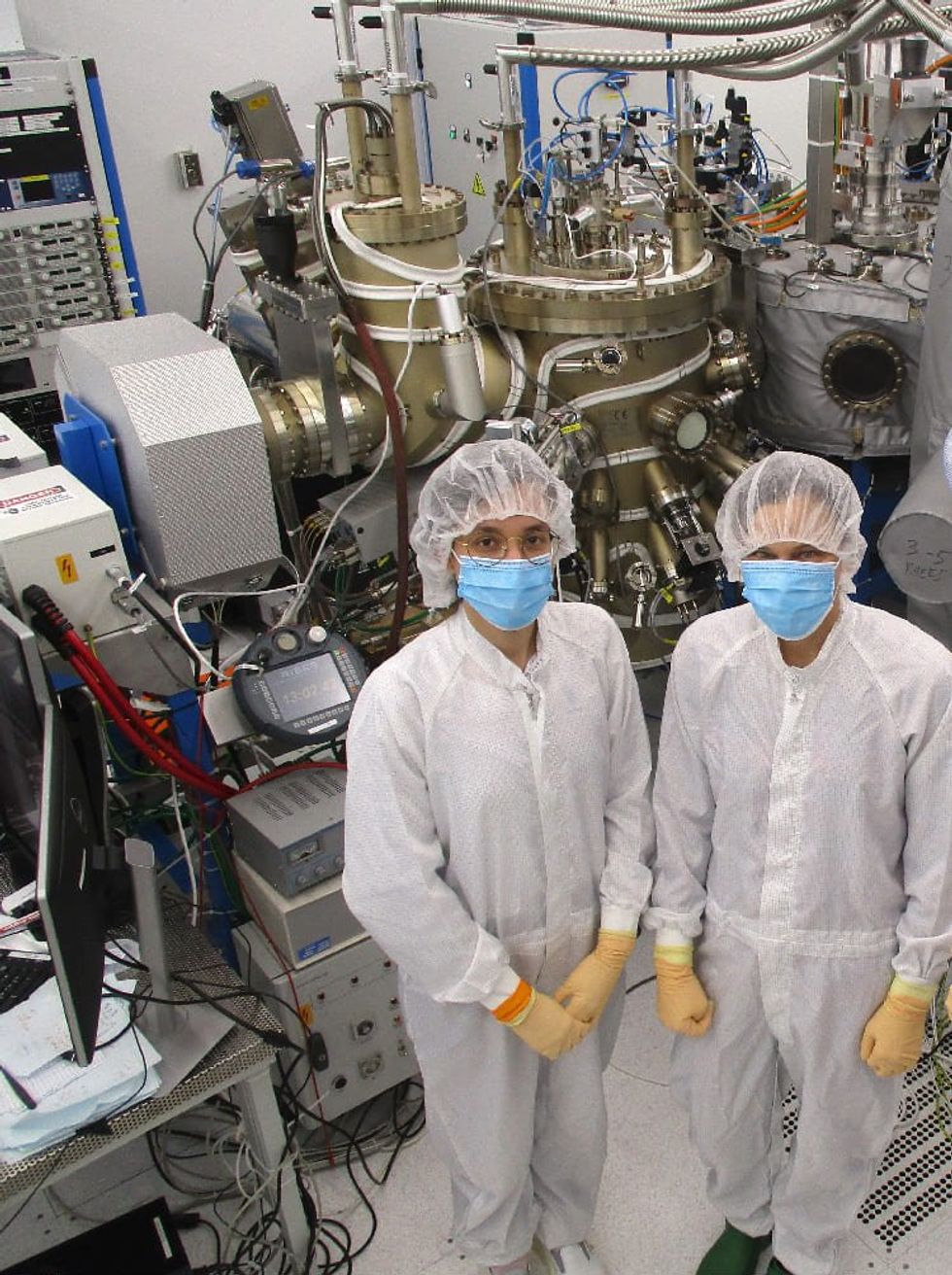

Researchers at the Vienna University of Technology grew the wires in a molecular beam epitaxy chamber.Maxwell Andrews/TU Wien

Researchers at the Vienna University of Technology grew the wires in a molecular beam epitaxy chamber.Maxwell Andrews/TU Wien