Zep: Fast, scalable building blocks for LLM apps

Production-grade chat history memory, vector search, data enrichment, and more.

Quick Start | Documentation | LangChain and LlamaIndex Support | Discord

www.getzep.com

What is Zep?

Zep is an open source platform for productionizing LLM apps. Take a prototype built with LangChain, LlamaIndex, or a custom app to production in minutes without rewriting code.

⭐️ Core Features

💬 Designed for building conversational LLM applications

- Manage users, sessions, chat messages, chat roles, and more, not just texts and embeddings.

- Build autopilots, agents, Q&A-over-documents apps, chatbots, and more.
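To make the user → session → message hierarchy concrete, here is a minimal in-memory sketch of that kind of data model. It is not Zep's implementation or SDK: `InMemoryChatStore`, `add_user`, `add_session`, `add_message`, and `get_sessions` are hypothetical names chosen only to mirror the concepts listed above.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Message:
    role: str  # e.g. "human" or "ai"
    content: str


@dataclass
class Session:
    session_id: str
    user_id: str
    metadata: Dict[str, str] = field(default_factory=dict)
    messages: List[Message] = field(default_factory=list)


class InMemoryChatStore:
    """Hypothetical store illustrating users -> sessions -> messages."""

    def __init__(self) -> None:
        self.users: Dict[str, Dict[str, str]] = {}
        self.sessions: Dict[str, Session] = {}

    def add_user(self, user_id: str, **metadata: str) -> None:
        self.users[user_id] = dict(metadata)

    def add_session(self, session_id: str, user_id: str) -> Session:
        # A session must belong to an existing user.
        if user_id not in self.users:
            raise KeyError(f"unknown user: {user_id}")
        session = Session(session_id=session_id, user_id=user_id)
        self.sessions[session_id] = session
        return session

    def add_message(self, session_id: str, role: str, content: str) -> None:
        self.sessions[session_id].messages.append(Message(role, content))

    def get_sessions(self, user_id: str) -> List[Session]:
        # Return every session owned by this user.
        return [s for s in self.sessions.values() if s.user_id == user_id]


store = InMemoryChatStore()
store.add_user("jane", email="user@example.com")
store.add_session("s1", "jane")
store.add_message("s1", "human", "Who was Octavia Butler?")
print(len(store.get_sessions("jane")))  # 1
```

Keeping roles on each message, rather than storing bare strings, is what lets a chat history be replayed into a prompt with the correct speaker labels.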

🛠️ Use as drop-in replacements for LangChain or LlamaIndex components, or in a framework-free app.

- Zep Memory and VectorStore implementations are shipped with LangChain, LangChain.js, and LlamaIndex.

- Python & TypeScript/JS SDKs for easy integration with your LLM app.

- TypeScript/JS SDK supports edge deployment.

🔎 Vector Database with Hybrid Search

- Populate your prompts with relevant documents and chat history.

- Combine vector similarity with rich metadata and JSONPath query filters for hybrid search over texts.
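Conceptually, one way to combine the two is to narrow the candidate set with a metadata predicate and then rank what remains by vector similarity. The sketch below illustrates that idea in plain Python; it is not how Zep executes queries internally. It assumes a simple key/value equality filter in place of Zep's JSONPath filters, brute-force cosine similarity in place of a real vector index, and toy two-dimensional embeddings. `hybrid_search` and `cosine` are hypothetical helpers.

```python
import math
from typing import Dict, List, Sequence, Tuple

Doc = Tuple[str, List[float], Dict[str, str]]  # (text, embedding, metadata)


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(
    docs: List[Doc],
    query_embedding: Sequence[float],
    metadata_filter: Dict[str, str],
    limit: int = 3,
) -> List[str]:
    # 1. Keep only documents whose metadata matches every filter key/value.
    candidates = [
        d for d in docs
        if all(d[2].get(k) == v for k, v in metadata_filter.items())
    ]
    # 2. Rank the survivors by cosine similarity to the query vector.
    candidates.sort(key=lambda d: cosine(d[1], query_embedding), reverse=True)
    return [text for text, _, _ in candidates[:limit]]


docs: List[Doc] = [
    ("Kindred", [0.9, 0.1], {"genre": "scifi"}),
    ("Parable of the Sower", [0.8, 0.3], {"genre": "scifi"}),
    ("A cookbook", [0.95, 0.05], {"genre": "cooking"}),
]
print(hybrid_search(docs, [1.0, 0.0], {"genre": "scifi"}, limit=2))
# ['Kindred', 'Parable of the Sower']
```

Note that "A cookbook" is the closest vector to the query, but the metadata filter removes it before ranking; without the filter it would come first.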

🔋 Batteries Included Embedding & Enrichment

- Automatically embed texts and messages using state-of-the-art open source models, OpenAI, or bring your own vectors.

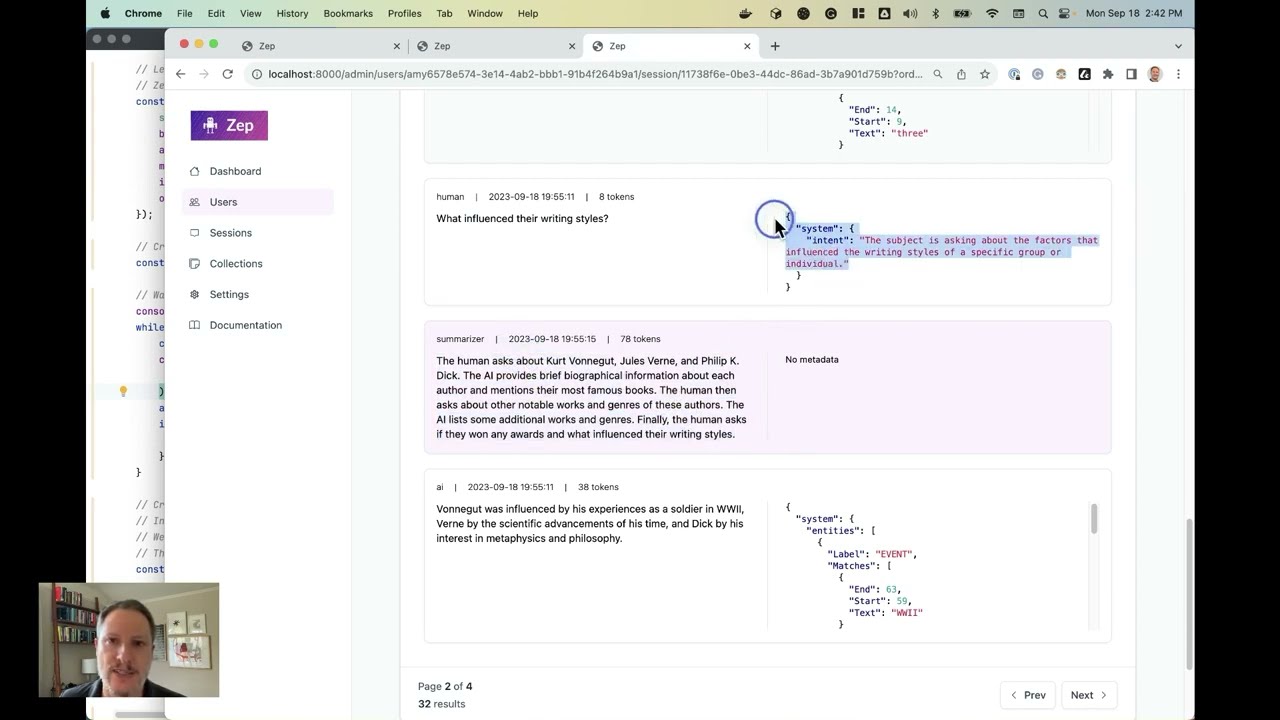

- Enrich chat histories with summaries, named entities, and token counts, and use these as search filters.

- Associate your own metadata with sessions, documents & chat histories.
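As an illustration of how enrichment output can double as a search filter, the sketch below attaches a token count to each message's metadata and then filters on it. It is a hypothetical stand-in: Zep performs its enrichment asynchronously, and the whitespace split used here is only a rough approximation of a model tokenizer. `ChatMessage`, `enrich`, and `approx_token_count` are illustrative names, not SDK calls.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Union

Meta = Dict[str, Union[int, str]]


@dataclass
class ChatMessage:
    role: str
    content: str
    metadata: Meta = field(default_factory=dict)


def approx_token_count(text: str) -> int:
    # Whitespace split: a crude stand-in for a real model tokenizer.
    return len(text.split())


def enrich(messages: List[ChatMessage]) -> None:
    # Attach a derived field to each message's metadata, in place.
    for m in messages:
        m.metadata["token_count"] = approx_token_count(m.content)


history = [
    ChatMessage("human", "Who was Octavia Butler?"),
    ChatMessage("ai", "Octavia E. Butler was an American science fiction author."),
]
enrich(history)

# Use the enrichment as a filter, e.g. keep only short messages.
short = [m for m in history if m.metadata["token_count"] <= 5]
print([m.role for m in short])  # ['human']
```

Because enriched fields live in the same metadata dictionary as user-supplied values, the same filtering code works for both.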

⚡️ Fast, scalable, low-latency APIs and stateless deployments

- Zep’s local embedding models and async enrichment ensure a snappy user experience.

- Storing documents and chat history in Zep rather than in application memory enables stateless deployments.
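The stateless pattern can be sketched as a request handler that keeps nothing in process memory: it reads the session's history from an external store, produces a reply, and writes both turns back. In this sketch `DictStore` is a hypothetical in-process stand-in for Zep so the example runs on its own, `HistoryStore` is an assumed interface, and the echo reply stands in for an LLM call.

```python
from typing import Dict, List, Protocol


class HistoryStore(Protocol):
    def get(self, session_id: str) -> List[str]: ...
    def append(self, session_id: str, message: str) -> None: ...


class DictStore:
    """Hypothetical stand-in for an external history service."""

    def __init__(self) -> None:
        self._data: Dict[str, List[str]] = {}

    def get(self, session_id: str) -> List[str]:
        return list(self._data.get(session_id, []))

    def append(self, session_id: str, message: str) -> None:
        self._data.setdefault(session_id, []).append(message)


def handle_request(store: HistoryStore, session_id: str, user_input: str) -> str:
    # No state lives in the handler: history is loaded and saved per request,
    # so any app replica can serve any session.
    history = store.get(session_id)
    reply = f"turn {len(history) // 2 + 1}: echo {user_input}"
    store.append(session_id, user_input)
    store.append(session_id, reply)
    return reply


shared = DictStore()
print(handle_request(shared, "s1", "hi"))     # turn 1: echo hi
print(handle_request(shared, "s1", "again"))  # turn 2: echo again
```

Since the handler only depends on the store interface, two separate app processes pointed at the same store would continue the same conversation.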

Learn more

- 🏎️ Quick Start Guide: Deploy with Docker or in the cloud and start coding in under 5 minutes.

- 📚 Zep By Example: Learn how to use Zep by example.

- 🦙 Building Apps with LlamaIndex

- 🦜⛓️ Building Apps with LangChain

- 🛠️ Getting Started with TypeScript/JS or Python

Examples

Create Users, Chat Sessions, and Chat Messages (Zep Python SDK)

import uuid

from zep_python import ZepClient
from zep_python.memory import Memory, Message, Session
from zep_python.user import CreateUserRequest

# zep_api_url and zep_api_key are assumed to be defined elsewhere
client = ZepClient(base_url=zep_api_url, api_key=zep_api_key)

# create a user
user_id = uuid.uuid4().hex  # A new user identifier
user_request = CreateUserRequest(
    user_id=user_id,
    email="user@example.com",
    first_name="Jane",
    last_name="Smith",
    metadata={"foo": "bar"},
)
new_user = client.user.add(user_request)

# create a chat session
session_id = uuid.uuid4().hex  # A new session identifier
session = Session(
    session_id=session_id,
    user_id=user_id,
    metadata={"foo": "bar"},
)
client.memory.add_session(session)

# Add a chat message to the session
history = [
    {"role": "human", "content": "Who was Octavia Butler?"},
]
messages = [Message(role=m["role"], content=m["content"]) for m in history]
memory = Memory(messages=messages)
client.memory.add_memory(session_id, memory)

# Get all sessions for user_id
sessions = client.user.get_sessions(user_id)

Persist Chat History with LangChain.js (Zep TypeScript SDK)

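import { ConversationChain } from "langchain/chains";
import { ZepMemory } from "langchain/memory/zep";

// sessionId, zepApiURL, zepApiKey, and model (an LLM instance) are assumed to be defined elsewhere
const memory = new ZepMemory({
  sessionId,
  baseURL: zepApiURL,
  apiKey: zepApiKey,
});

const chain = new ConversationChain({ llm: model, memory });

const response = await chain.call({
  input: "What is the book's relevance to the challenges facing contemporary society?",
});

Hybrid similarity search over a document collection with text input and JSONPath filters (TypeScript)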

const query = "Who was Octavia Butler?";
const searchResults = await collection.search({ text: query }, 3);

// Search for documents using both text and metadata
const metadataQuery = {
  where: { jsonpath: '$[*] ? (@.genre == "scifi")' },
};

const newSearchResults = await collection.search(
  {
    text: query,
    metadata: metadataQuery,
  },
  3
);

Create a LlamaIndex Index using Zep as a VectorStore (Python)

from llama_index import VectorStoreIndex, SimpleDirectoryReader
from llama_index.vector_stores import ZepVectorStore
from llama_index.storage.storage_context import StorageContext

vector_store = ZepVectorStore(
    api_url=zep_api_url,
    api_key=zep_api_key,
    collection_name=collection_name
)

documents = SimpleDirectoryReader("documents/").load_data()
storage_context = StorageContext.from_defaults(vector_store=vector_store)
index = VectorStoreIndex.from_documents(
    documents,
    storage_context=storage_context
)

Search by embedding (Zep Python SDK)

# Search by embedding vector, rather than text query
# embedding is a list of floats
results = collection.search(
    embedding=embedding, limit=5
)

Get Started

Install Server

Please see the Zep Quick Start Guide for important configuration information.

docker compose up

Looking for other deployment options?

Install SDK

Please see the Zep Development Guide for important beta information and usage instructions.

pip install zep-python

or

npm i @getzep/zep-js